Summary - AI-powered content moderation revolutionizes online platforms. It swiftly identifies and filters inappropriate material, learns from interactions, ... and ensures a safe digital environment for users.

As someone who works closely with AI-powered content moderation, I’ve witnessed firsthand its transformative impact on the digital space. At its core, AI content moderation is about using machine learning to review and screen online content. For instance, when we post a comment or an image on a website, automated content moderation steps in: a content-filtering model immediately scans the material to check whether it aligns with platform guidelines.

Moreover, AI moderation software has dramatically increased the speed and efficiency of this process. In the past, human moderators had to go through each submission painstakingly. Now, AI-driven content review systems can analyze vast amounts of data in seconds. It’s like having a vigilant watchdog that never sleeps.

One of the primary benefits of such systems is the breadth of content safety solutions they offer. AI content analysis examines it all, from textual data to images and videos, flagging potentially inappropriate or harmful content. This doesn’t just make the internet a safer place. It also gives businesses peace of mind. Instead of worrying about inappropriate material slipping through, they can use advanced content moderation tools to maintain their platform’s integrity.

But it’s not just about speed. Machine learning content moderation can keep improving: when moderators’ decisions and users’ appeals are fed back as labeled examples, the model can be retrained to refine its content screening. Done well, this means fewer false positives and a smoother user experience.

Investing in AI solutions is a no-brainer for businesses seeking top-tier content moderation services. With the advent of text moderation AI, even the nuances of written content can be taken into account. So, whether you’re a budding startup or an established enterprise, incorporating AI strategies into your moderation processes is the way forward.

Content Moderation ensures online spaces are safe and respectful by filtering and reviewing user-generated contributions swiftly and effectively.

Why Is AI-Powered Content Moderation Important?


AI’s Role

The emergence of artificial intelligence in content moderation has brought a paradigm shift. Instead of relying solely on traditional, manual methods, platforms can now use algorithms to process, interpret, and act on vast amounts of data. The goal is not just removing content but understanding context, nuance, and intent. AI has also made its presence felt in social media content moderation.


Efficiency

The speed and scale of AI can’t be matched by human moderators alone. With AI, online platforms can review, flag, or block inappropriate content almost instantly, giving users a more consistent and positive experience.


Analysis Depth

Unlike simplistic filters that only search for keywords, AI models aim to capture the meaning of content. They can analyze imagery, detect subtle nuances in text, and even interpret video content. This helps ensure that innocent posts aren’t accidentally flagged while genuinely inappropriate content doesn’t slip through.
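To make the contrast concrete, here is a minimal sketch of the kind of simplistic keyword filter described above. The two-word blocklist is hypothetical. Plain substring matching flags innocent words that merely contain a blocked term, and even whole-word matching stays blind to context, which is the gap learned models try to close.

```python
import re

# Hypothetical blocklist, for illustration only.
BLOCKLIST = ["ass", "hell"]


def naive_flag(text: str) -> bool:
    """Flag text if any blocked term appears anywhere, even inside another word."""
    lowered = text.lower()
    return any(term in lowered for term in BLOCKLIST)


def word_boundary_flag(text: str) -> bool:
    """Flag text only when a blocked term appears as a whole word."""
    pattern = r"\b(" + "|".join(map(re.escape, BLOCKLIST)) + r")\b"
    return re.search(pattern, text, flags=re.IGNORECASE) is not None


for post in ["A classic assessment of the game", "Say hello to everyone"]:
    # The naive filter flags both innocent posts; the word-boundary filter flags neither.
    print(post, naive_flag(post), word_boundary_flag(post))
```

Neither function knows whether "hell" appears in a quote, a place name, or an insult; that judgment is what the AI approach is meant to add.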


Immediate Action

One of the standout benefits of AI is its ability to operate in real time. When a user uploads a post, image, or video, the AI system can immediately assess it and decide what to do, so harmful content doesn’t linger. This instantaneous processing allows platforms to enforce their content moderation policies at scale by identifying violations of safety guidelines.
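A real-time decision usually boils down to turning a model’s score into an action. The sketch below assumes a hypothetical classifier that outputs a harm probability between 0 and 1; the two thresholds are illustrative values, not recommendations, and borderline scores are routed to a human rather than decided automatically.

```python
from dataclasses import dataclass
from enum import Enum


class Action(Enum):
    ALLOW = "allow"
    REVIEW = "review"  # queue for a human moderator
    BLOCK = "block"


@dataclass(frozen=True)
class Thresholds:
    # Hypothetical values; each platform would tune these to its own policy.
    review: float = 0.5
    block: float = 0.9


def decide(score: float, t: Thresholds = Thresholds()) -> Action:
    """Map a harm probability from a (hypothetical) model to a moderation action."""
    if not 0.0 <= score <= 1.0:
        raise ValueError("score must be a probability in [0, 1]")
    if score >= t.block:
        return Action.BLOCK
    if score >= t.review:
        return Action.REVIEW
    return Action.ALLOW
```

For example, `decide(0.95)` returns `Action.BLOCK`, `decide(0.6)` returns `Action.REVIEW`, and `decide(0.1)` returns `Action.ALLOW`. Keeping a review band between the two thresholds is one way to act instantly on clear cases without letting the model make every close call alone.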


Ever-Evolving

Machine learning, a subset of AI, allows the system to learn from its mistakes. If it wrongly blocks appropriate content or allows harmful content, feedback mechanisms such as moderator corrections can be used to update it, reducing errors over time.
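One simple way to picture this feedback loop is a classifier that absorbs each moderator label as a new training example. The sketch below uses a tiny multinomial Naive Bayes model as a stand-in; production systems typically use far larger models and batch retraining, and the class name, labels, and example posts are all hypothetical.

```python
import math
from collections import Counter


class FeedbackClassifier:
    """Tiny multinomial Naive Bayes updated whenever a moderator labels a post."""

    LABELS = ("ok", "harmful")

    def __init__(self) -> None:
        self.word_counts = {label: Counter() for label in self.LABELS}
        self.doc_counts = Counter()
        self.vocab: set[str] = set()

    @staticmethod
    def _tokens(text: str) -> list[str]:
        return text.lower().split()

    def update(self, text: str, label: str) -> None:
        """Incorporate one labeled example, e.g. a moderator overturning a decision."""
        if label not in self.LABELS:
            raise ValueError(f"unknown label: {label}")
        tokens = self._tokens(text)
        self.word_counts[label].update(tokens)
        self.doc_counts[label] += 1
        self.vocab.update(tokens)

    def prob_harmful(self, text: str) -> float:
        """Posterior P(harmful | text) with add-one (Laplace) smoothing."""
        total_docs = sum(self.doc_counts.values())
        log_scores = {}
        for label in self.LABELS:
            # Smoothed prior, so a label with no examples yet never gives log(0).
            prior = (self.doc_counts[label] + 1) / (total_docs + len(self.LABELS))
            # The extra +1 reserves probability mass for words never seen before.
            denom = sum(self.word_counts[label].values()) + len(self.vocab) + 1
            score = math.log(prior)
            for tok in self._tokens(text):
                score += math.log((self.word_counts[label][tok] + 1) / denom)
            log_scores[label] = score
        # Convert the two log scores into a normalized probability.
        top = max(log_scores.values())
        exp = {k: math.exp(v - top) for k, v in log_scores.items()}
        return exp["harmful"] / sum(exp.values())


clf = FeedbackClassifier()
clf.update("buy cheap pills now", "harmful")
clf.update("see you at the meeting", "ok")
before = clf.prob_harmful("limited offer")
clf.update("limited offer", "harmful")  # a moderator flags a post the model had missed
after = clf.prob_harmful("limited offer")
print(before, after)  # the score for similar posts rises after the correction
```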


Variety of Tools

AI’s applications in content moderation are diverse. For written posts, some algorithms can gauge sentiment and context. For images or videos, visual recognition software can detect inappropriate imagery. This range of AI tools helps platforms screen every type of content they host.


Necessity in the Digital Age

As the internet continues to grow, so does the amount of content. Humans alone can’t keep up with moderating everything. AI assists in managing the sheer volume, ensuring online platforms remain safe spaces for users. This is how AI content moderation is changing social media.


Quality Assurance

Online platform reputation is paramount. With AI moderation, platform owners can assure users that the content they interact with meets a certain quality and safety standard. This not only enhances user trust but also encourages more engagement.

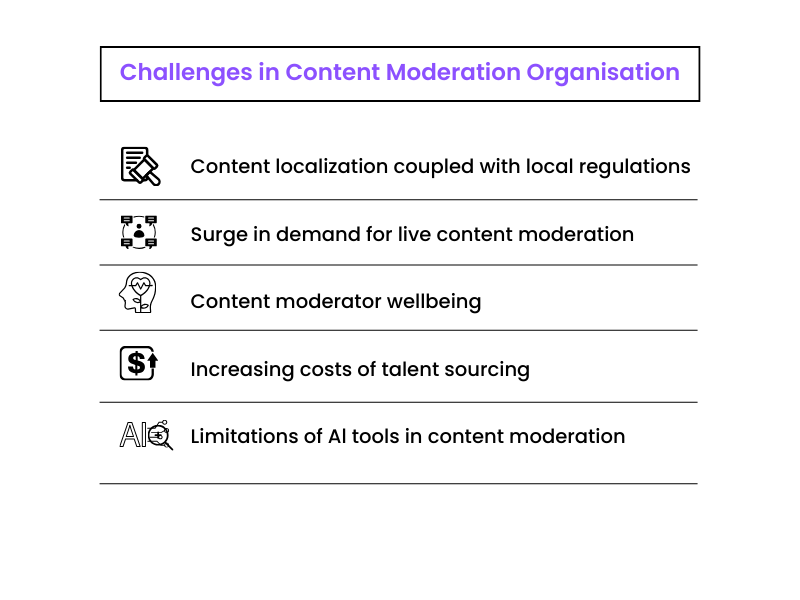

Challenges Of Content Moderation: A Personal Insight

- Firstly, defining appropriate content is challenging. Cultural and individual values vary, so what’s acceptable to one person might be offensive to another.

- Secondly, the volume of content I handle daily is overwhelming. Every minute, users upload countless posts, photos, and videos.

- Next, automating the content moderation process has its pitfalls. While technology helps, it often misinterprets context or nuance.

- Also, the line between censorship and moderation is thin. I constantly strive to uphold free speech without enabling harmful behavior.

- Furthermore, the emotional toll is real. I sometimes come across disturbing or harmful content, which can be mentally taxing.

- Additionally, backlash from users is frequent. Some feel their voices are being stifled when their content gets flagged.

- Lastly, consistency is a challenge. Ensuring all moderators on the team apply the same rules to every piece of content is hard.
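Consistency between moderators can at least be measured. A common statistic for two raters is Cohen’s kappa, which compares how often they agree with how often they would agree by chance. The sketch below is a plain-Python version; the two label lists are made up for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a: list[str], rater_b: list[str]) -> float:
    """Cohen's kappa for two moderators labeling the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("need two equal-length, non-empty label lists")
    n = len(rater_a)
    # Observed agreement: share of items both moderators labeled the same way.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Expected agreement if each moderator labeled at random with their own label rates.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    labels = counts_a.keys() | counts_b.keys()
    expected = sum(counts_a[l] * counts_b[l] for l in labels) / (n * n)
    if expected == 1.0:
        return 1.0  # both used one identical label throughout: treat as perfect agreement
    return (observed - expected) / (1 - expected)


# Hypothetical decisions by two moderators on the same six posts.
mod_a = ["ok", "ok", "remove", "remove", "ok", "remove"]
mod_b = ["ok", "remove", "remove", "remove", "ok", "ok"]
print(round(cohens_kappa(mod_a, mod_b), 3))  # 0.333
```

Here the moderators agree on four of six posts, but since half that agreement would be expected by chance, kappa is only about 0.33, a signal that the written guidelines may need clarifying.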


AI: Revolutionizing Content Moderation

AI has made significant strides in content moderation. Here are some prominent use cases:

Text Moderation

AI scans textual content, such as comments, reviews, or posts, for inappropriate language, hate speech, or spam. Understanding context and sentiment allows AI to discern between harmless and harmful content. This is also how AI improves content moderation on dating platforms.

Image and Video Moderation

AI can detect explicit content, violent imagery, or other predefined inappropriate visual materials in photos and videos using image recognition algorithms. This is especially useful on platforms where users upload vast amounts of visual content.
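The paragraph above describes recognition models that classify imagery. A simpler, complementary technique, named here plainly because it is a different approach, is hash matching: comparing an upload against fingerprints of images already known to violate policy. The sketch below implements an average hash (aHash) over an 8×8 grayscale grid that is assumed to be already downscaled; real systems use an image library for resizing and more robust perceptual hashes, and the distance cutoff is a hypothetical value.

```python
def average_hash(pixels: list[list[int]]) -> int:
    """64-bit average hash of an 8x8 grayscale image with values 0-255."""
    flat = [p for row in pixels for p in row]
    if len(flat) != 64:
        raise ValueError("expected an 8x8 grid")
    mean = sum(flat) / 64
    bits = 0
    for p in flat:
        # One bit per pixel: 1 if brighter than the image's mean.
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming(a: int, b: int) -> int:
    """Number of differing bits between two hashes."""
    return bin(a ^ b).count("1")


def matches_known(pixels: list[list[int]], known_hashes: set[int], max_distance: int = 5) -> bool:
    """True if the image is within max_distance bits of any known prohibited hash."""
    h = average_hash(pixels)
    return any(hamming(h, k) <= max_distance for k in known_hashes)


# A synthetic gradient image and a slightly brightened copy of it.
img = [[(r * 8 + c) * 4 for c in range(8)] for r in range(8)]
brighter = [[p + 3 for p in row] for row in img]
print(matches_known(brighter, {average_hash(img)}))  # True
```

Because each bit only records "above or below the mean", uniform brightness changes leave the hash unchanged, which is why small edits to a known image can still be caught.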

Live Streaming Moderation

With the rise of live broadcasts on social platforms, moderating in real-time has become essential. AI can monitor live streams to flag or block content that violates community guidelines.
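For live streams, a system typically scores sampled frames as they arrive rather than waiting for the broadcast to end. The sketch below assumes a hypothetical per-frame classifier that supplies scores; it escalates only when several recent frames exceed the threshold, so a single noisy frame doesn’t cut a stream. The window size, hit count, and threshold are illustrative.

```python
from collections import deque


class StreamMonitor:
    """Flag a live stream when enough recently sampled frames look harmful."""

    def __init__(self, window: int = 5, min_hits: int = 3, threshold: float = 0.8) -> None:
        self.recent: deque[bool] = deque(maxlen=window)  # only the last `window` frames count
        self.min_hits = min_hits
        self.threshold = threshold

    def observe(self, frame_score: float) -> bool:
        """Record one frame's harm score; return True if the stream should be escalated."""
        self.recent.append(frame_score >= self.threshold)
        return sum(self.recent) >= self.min_hits


monitor = StreamMonitor()
results = [monitor.observe(s) for s in [0.1, 0.9, 0.2, 0.85, 0.95]]
print(results)  # [False, False, False, False, True]
```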

Behavior Analysis

Beyond just content, AI can analyze user behavior. By monitoring patterns, AI can help identify users likely to post harmful content, allowing platforms to take preventive measures.
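One of the simplest behavioral signals is posting velocity: spam accounts often post in bursts. The sketch below is a sliding-window heuristic rather than a predictive model; the limits are hypothetical and timestamps are assumed to arrive in non-decreasing order per user.

```python
from collections import defaultdict, deque


class BurstDetector:
    """Flag users who post more than max_posts times within window_seconds."""

    def __init__(self, max_posts: int = 5, window_seconds: float = 60.0) -> None:
        self.max_posts = max_posts
        self.window = window_seconds
        self.history: dict[str, deque[float]] = defaultdict(deque)

    def record(self, user_id: str, timestamp: float) -> bool:
        """Record a post; return True if the user is over the limit right now."""
        times = self.history[user_id]
        times.append(timestamp)
        # Drop posts that have fallen out of the sliding window.
        while times and times[0] <= timestamp - self.window:
            times.popleft()
        return len(times) > self.max_posts


detector = BurstDetector(max_posts=3, window_seconds=10)
print([detector.record("u1", t) for t in [0, 1, 2, 3]])  # [False, False, False, True]
```

A flag like this would usually feed into review or rate limiting rather than an automatic ban, since legitimate users sometimes post in quick succession too.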

Fake News and Misinformation Detection

AI can cross-reference information with trusted sources to identify potential misinformation or fake news articles, thereby maintaining platform credibility.

Advertisement Moderation

Platforms that serve ads can use AI to ensure advertisements adhere to community and quality guidelines, protecting users from misleading or harmful ads. Content moderation for ad campaigns and apps is especially important in these cases.

Contextual Understanding

AI can discern the context in which content is presented. For example, a word might be harmless in one context but offensive in another. AI can take these nuances into account to make more informed moderation decisions.

Feedback Loop and Learning

The system learns and refines its processes as users provide feedback on AI’s moderation decisions. This continuous improvement ensures the moderation system stays updated with evolving content trends and community standards.

Multilingual Content Moderation

With language processing capabilities, AI can moderate content in multiple languages, making it invaluable for global platforms.

Content Categorization and Tagging

Beyond just blocking or allowing, AI can categorize and tag content based on its nature. This helps with content organization and with recommendations for users. It can also support broader data classification efforts.
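Tagging differs from a single allow/block decision because one post can carry several labels at once. A minimal sketch, assuming a hypothetical multi-label model that returns one score per category, applies a separate threshold to each category:

```python
def assign_tags(
    scores: dict[str, float],
    thresholds: dict[str, float],
    default: float = 0.5,
) -> list[str]:
    """Return every category whose score meets that category's own threshold."""
    return sorted(
        label for label, score in scores.items()
        if score >= thresholds.get(label, default)
    )


# Hypothetical model output and per-category thresholds.
scores = {"sports": 0.92, "violence": 0.35, "spam": 0.6}
thresholds = {"violence": 0.3, "spam": 0.7}
print(assign_tags(scores, thresholds))  # ['sports', 'violence']
```

Per-category thresholds let a platform be cautious where mistakes are costly (a low bar for tagging possible violence) and stricter where over-tagging is merely annoying (a high bar for spam).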

Frequently Asked Questions

1. What are the disadvantages of AI content moderation?

- Lack of Nuance: AI can sometimes miss the context or cultural nuances.

- False Positives/Negatives: AI might mistakenly allow inappropriate content or block legitimate content.

- Over-reliance: Solely depending on AI can lead to overlooking certain harmful content.

- Ethical Concerns: Decisions made by AI regarding what’s appropriate can raise moral and freedom of speech concerns.
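False positives and false negatives are usually tracked as precision (how many flagged items were truly harmful) and recall (how many harmful items were caught), measured on a sample that humans have labeled. The labels below are made up for illustration.

```python
def precision_recall(y_true: list[bool], y_pred: list[bool]) -> tuple[float, float]:
    """Precision and recall for 'harmful' (True) predictions."""
    if len(y_true) != len(y_pred):
        raise ValueError("label lists must have the same length")
    tp = sum(t and p for t, p in zip(y_true, y_pred))        # harmful and flagged
    fp = sum(not t and p for t, p in zip(y_true, y_pred))    # false positive: legitimate but flagged
    fn = sum(t and not p for t, p in zip(y_true, y_pred))    # false negative: harmful but missed
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall


human_labels = [True, True, False, False, True, False]
ai_flags = [True, False, True, True, True, False]
print(precision_recall(human_labels, ai_flags))  # (0.5, 0.6666666666666666)
```

In this toy sample, half of the AI’s flags hit legitimate posts while one harmful post slipped through, which is exactly the kind of gap that keeps humans in the loop.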

2. How does AI-powered content moderation handle multilingual content?

AI systems often have natural language processing capabilities that allow them to understand and moderate content in multiple languages. They can be trained on diverse datasets to ensure effective moderation across various languages.

3. Is AI content moderation suitable for all platforms, irrespective of their size?

Yes, AI content moderation can be scaled to fit platforms of all sizes, from niche community forums to major social media networks. The scalability of AI solutions makes them suitable for diverse applications.

4. How do users know if the content is being moderated by AI or humans?

Platforms may not explicitly inform users whether content is being moderated by AI or humans. However, some platforms may choose to be transparent about their moderation processes in their terms of service or community guidelines.
