LiDAR technology is at the core of modern AI perception systems. According to recent industry reports, the global LiDAR market is projected to surpass $3.5 billion by 2030, driven largely by demand from autonomous vehicles and robotics. As this technology grows, so does the need for high-quality LiDAR data annotation.

Two of the most widely used techniques today are point cloud annotation and 3D cuboid annotation. Each method has distinct strengths, and choosing the right one can directly affect how well your AI model performs in the real world.

In this guide, we break down both techniques, compare their use cases, and help you decide which approach fits your project best.

Key Takeaways for Point Cloud Annotation vs 3D Cuboid

- Point cloud annotation labels individual data points in 3D space for high-precision AI tasks.

- 3D cuboid annotation draws three-dimensional bounding boxes around objects, making it faster and more scalable.

- Point cloud annotation works best for semantic segmentation, HD map creation, and complex terrain data.

- 3D cuboid labeling is ideal for object detection tasks in autonomous driving and robotics.

- Both techniques can be used together for more comprehensive LiDAR-based AI models.

What Is Point Cloud Annotation?

A point cloud is a collection of individual data points, often numbering in the millions, captured by LiDAR sensors. Each point carries its position in 3D space, along with attributes like intensity, or color when the scan is fused with camera data. Point cloud annotation is the process of labeling these individual points to help AI models understand the structure of a scene.

This technique is especially useful when you need precise, point-level detail. For example, it can distinguish the exact edges of a building, the surface of a road, or even low-lying vegetation in a single scan.

How Does It Work?

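- LiDAR sensors emit laser pulses that bounce off surfaces and return to the sensor. The round-trip time of each pulse gives the distance to the surface, which becomes one point in the cloud.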

- Annotators classify each point or group of points into categories like road, vehicle, pedestrian, or building.

- The result is a richly labeled 3D environment that AI models can use for spatial reasoning.
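The steps above can be sketched in Python. This is a minimal illustration under stated assumptions, not how any particular sensor or tool works: the `LidarPoint` class and `return_to_point` function are hypothetical, the axis convention (x forward, y left, z up) is assumed, and real sensors usually report range directly rather than raw round-trip time. It shows how one return becomes a 3D point and how point-level annotation attaches a class to that point.

```python
import math
from dataclasses import dataclass

SPEED_OF_LIGHT = 299_792_458.0  # metres per second


@dataclass
class LidarPoint:
    x: float
    y: float
    z: float
    intensity: float
    label: str = "unlabeled"  # set during point-level annotation


def return_to_point(round_trip_s: float, azimuth_rad: float,
                    elevation_rad: float, intensity: float) -> LidarPoint:
    """Turn one time-of-flight return into a 3D point in the sensor frame."""
    # The pulse travels out and back, so the range is half the path length.
    rng = SPEED_OF_LIGHT * round_trip_s / 2.0
    # Spherical-to-Cartesian conversion (assumed axes: x forward, y left, z up).
    horizontal = rng * math.cos(elevation_rad)
    return LidarPoint(
        x=horizontal * math.cos(azimuth_rad),
        y=horizontal * math.sin(azimuth_rad),
        z=rng * math.sin(elevation_rad),
        intensity=intensity,
    )


# A return after about 66.7 ns corresponds to a surface roughly 10 m ahead.
point = return_to_point(66.7e-9, azimuth_rad=0.0, elevation_rad=0.0, intensity=0.4)
point.label = "vehicle"  # the annotator assigns one class per point
```

In a full scan this happens for every return, so a labeled point cloud is essentially a long list of such points, each carrying its own class.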

Point cloud annotation supports tasks like semantic segmentation, instance segmentation, and 3D object detection. It is one of the most powerful ways to annotate complex 3D scenes where box-level labels cannot capture the geometry.

What Is 3D Cuboid Annotation?

3D cuboid annotation, also called 3D bounding box annotation, involves placing a three-dimensional rectangular box around an object in a LiDAR scan. Unlike point cloud annotation, this method does not label every point. Instead, it defines the position, size, and orientation of each object within the scene.

This approach is widely used in autonomous driving annotation because it trains AI systems to identify and track objects like cars, trucks, and pedestrians in real time.

How Does It Work?

- Annotators place a 3D box around each object of interest in the point cloud.

- Each box records the object’s center position, length, width, height, and rotation (heading) angle.

- The labeled data trains AI models to detect and track objects in 3D space.
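A cuboid label is compact: a center, three dimensions, and a heading. The sketch below assumes a hypothetical `Cuboid` class with yaw measured about the vertical axis and length measured along the heading; real datasets differ in these conventions, so check your format's documentation. It shows how the box parameters determine which points fall inside the box and where its eight corners lie.

```python
import math
from dataclasses import dataclass


@dataclass
class Cuboid:
    cx: float      # box centre, metres
    cy: float
    cz: float
    length: float  # extent along the heading direction
    width: float
    height: float
    yaw: float     # heading: rotation about the vertical (z) axis, radians
    label: str

    def contains(self, x: float, y: float, z: float) -> bool:
        """Return True if the point lies inside (or on) the box."""
        # Shift into the box frame, then undo the yaw rotation.
        dx, dy, dz = x - self.cx, y - self.cy, z - self.cz
        c, s = math.cos(-self.yaw), math.sin(-self.yaw)
        local_x = dx * c - dy * s
        local_y = dx * s + dy * c
        return (abs(local_x) <= self.length / 2
                and abs(local_y) <= self.width / 2
                and abs(dz) <= self.height / 2)

    def corners(self) -> list:
        """Return the eight corner points in world coordinates."""
        c, s = math.cos(self.yaw), math.sin(self.yaw)
        points = []
        for sx in (-1, 1):
            for sy in (-1, 1):
                for sz in (-1, 1):
                    lx = sx * self.length / 2
                    ly = sy * self.width / 2
                    lz = sz * self.height / 2
                    points.append((self.cx + lx * c - ly * s,
                                   self.cy + lx * s + ly * c,
                                   self.cz + lz))
        return points


# A car centred 10 m ahead, rotated 90 degrees so its length runs along y.
car = Cuboid(10.0, 0.0, 0.8, 4.5, 1.8, 1.5, math.pi / 2, "car")
print(car.contains(10.0, 2.0, 0.8))  # True: on the car's long axis
print(car.contains(11.5, 0.0, 0.8))  # False: 1.5 m to the side of a 1.8 m-wide box
```

Points inside a box are only implicitly associated with its label, which is why cuboids are cheaper to produce but less precise than per-point labels.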

3D cuboid annotation is faster than full point cloud annotation. It works well when you need to annotate large datasets quickly without sacrificing object-level accuracy.

The annotated data produced by this method serves directly as training data for object detection models.

Platforms like Amazon SageMaker Ground Truth support LiDAR point cloud annotation and 3D bounding box workflows, making professional-grade 3D cuboid annotation accessible to teams of all sizes.

Point Cloud Annotation vs 3D Cuboid: A Side-by-Side Comparison

Here is a quick overview of how the two techniques stack up against each other:

| Factor | Point Cloud Annotation | 3D Cuboid |
|---|---|---|
| Detail Level | Precise per-point labeling | Object-level bounding box |
| Best For | Dense, complex scenes | Fast object detection |
| Accuracy | Very high | Moderate to high |
| Processing Time | Slower | Faster |
| Use Case | Semantic segmentation, HD mapping | Autonomous driving, robotics |
| Data Output | Segmented point cloud | Labeled 3D bounding boxes |
| Tool Complexity | High | Moderate |
| AI Model Type | Segmentation, classification models | Object detection models |

As the table shows, there is no universal winner. The best method depends on your project goals, the type of LiDAR data you are working with, and the AI model you are training.

Key Differences Between the Two Techniques

1. Level of Detail

Point cloud annotation captures fine-grained spatial detail at the level of individual points, a granularity that box-level labeling cannot match. This makes it the right choice when annotation accuracy matters more than speed.

3D cuboid annotation, on the other hand, captures object boundaries without labeling every single point. When you compare the accuracy of 3D cuboid and point-wise annotation, point-wise methods win on precision, while cuboid methods win on throughput.
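The precision gap can be illustrated with a toy example. This sketch assumes an axis-aligned box and invented coordinates (not measured data), and `in_box` and `class_iou` are hypothetical helpers. Converting a cuboid into per-point labels sweeps up every point inside the box, including road surface under the car, so the cuboid-derived "car" labels score lower against true per-point labels.

```python
def in_box(point, box_min, box_max):
    """Axis-aligned containment test (yaw omitted to keep the sketch short)."""
    return all(lo <= v <= hi for v, lo, hi in zip(point, box_min, box_max))


def class_iou(predicted, reference, cls):
    """Intersection-over-union of one class, computed over individual points."""
    inter = sum(p == cls and r == cls for p, r in zip(predicted, reference))
    union = sum(p == cls or r == cls for p, r in zip(predicted, reference))
    return inter / union if union else 1.0


# Hypothetical toy scan: each point paired with its true per-point label.
scan = [
    ((10.0, 0.0, 0.50), "car"),
    ((10.5, 0.3, 1.20), "car"),
    ((11.0, -0.4, 0.90), "car"),
    ((10.2, 0.8, 0.02), "road"),  # road surface under the car's edge
    ((11.8, 0.1, 0.01), "road"),  # road surface inside the box footprint
    ((14.0, 2.0, 0.00), "road"),
]
reference = [label for _, label in scan]

# Turn a cuboid drawn around the car into point labels.
box_min, box_max = (9.0, -1.0, 0.0), (12.0, 1.0, 1.6)
from_cuboid = ["car" if in_box(p, box_min, box_max) else "road" for p, _ in scan]

print(class_iou(from_cuboid, reference, "car"))  # 0.6
```

With these toy numbers the cuboid-derived car IoU is 0.6; in practice the gap depends on how tightly boxes are drawn and whether ground points are filtered out first.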

2. Speed and Scalability of Point Cloud Annotation vs 3D Cuboid

When teams work with large volumes of LiDAR data, 3D cuboid annotation is significantly faster. Annotators can label objects with bounding boxes in a fraction of the time it takes to perform full point-level segmentation, which makes cuboids more practical for high-volume annotation workflows.

3. Accuracy for AI Models

For AI tasks that require understanding exact object shapes and spatial relationships, such as 3D point cloud data annotation for HD maps or terrain models, point cloud methods deliver superior accuracy.

Semantic segmentation in particular assigns a class to every point, producing the dense labels that scene-understanding models need.

For straightforward object detection models, a 3D cuboid provides enough detail to train reliable systems.

4. Annotation Workflow Complexity

Point cloud annotation requires more experienced annotation teams and longer timelines. Managing annotation quality also takes more effort, especially when working with LiDAR scans that contain millions of points. 3D cuboid workflows are easier to standardize and scale.

When data labeling needs to move fast, cuboid-based methods keep the annotation process much simpler. A well-designed 3D cuboid workflow reduces turnaround time without compromising output quality.

When to Use Point Cloud Annotation?

Point cloud annotation is the right choice when your AI model needs to understand the full geometry of a scene. Below are the most common use cases:

- HD map creation for autonomous vehicles where every road marking, lane boundary, and surface detail matters.

- Aerial and drone-based LiDAR scans where terrain features like slopes, trees, and structures need precise classification.

- Construction site monitoring, where point density maps are used to measure changes over time.

- Agricultural surveys where plant health and soil analysis depend on fine-grained 3D surface data.

- Geospatial projects that require detailed environmental modeling.
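The density-map idea in the construction bullet can be sketched as a grid of point counts compared between two scans. This is a simplified illustration that assumes both scans are already aligned in the same coordinate frame and were captured with similar coverage; the function names, the 1 m cell size, and the change threshold are hypothetical. Production change detection typically also has to account for differences in scan coverage between epochs.

```python
import math
from collections import Counter


def density_map(points, cell_size=1.0):
    """Count points per (x, y) grid cell: a simple bird's-eye-view density map."""
    return Counter((math.floor(x / cell_size), math.floor(y / cell_size))
                   for x, y, _z in points)


def changed_cells(before, after, cell_size=1.0, min_change=5):
    """Return grid cells whose point count changed by at least min_change."""
    a, b = density_map(before, cell_size), density_map(after, cell_size)
    return sorted(cell for cell in set(a) | set(b)
                  if abs(b[cell] - a[cell]) >= min_change)


# Hypothetical epochs: the second scan shows new material piled in one cell.
week_1 = [(0.1 * i, 0.5, 0.0) for i in range(10)]
week_2 = week_1 + [(3.5, 2.5, 1.0)] * 8
print(changed_cells(week_1, week_2))  # [(3, 2)]
```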

If you are working on a project where annotation quality is critical and your budget allows for longer timelines, point cloud annotation will give your AI model the precisely labeled point clouds it needs.

This is especially true for machine learning pipelines that depend on 3D LiDAR annotation and cannot afford gaps in spatial accuracy.

When to Use 3D Cuboid Annotation?

A 3D cuboid is the better option when you need fast, scalable labeling for well-defined objects in 3D space. Here are scenarios where it performs best:

- Autonomous driving datasets where vehicles, pedestrians, and cyclists need to be detected in real time.

- Warehouse and logistics robotics, where AI systems pick up and move objects.

- Traffic management systems that track vehicle movement across intersections.

- Security and surveillance AI that monitors people and objects in outdoor environments.

- Any use case where object detection models need a 3D bounding box as a training input.

Autonomous driving is one of the most common applications of 3D cuboid annotation. It balances annotation speed with enough spatial accuracy to train reliable detection models.

For teams learning how to annotate point clouds with cuboids, the process is more intuitive than full point-level segmentation, and advanced annotation platforms now make it even faster with semi-automated tools.
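Semi-automated tools often start from an automatically fitted box that the annotator then corrects. As a hedged illustration (not the method of any specific platform), the sketch below fits an oriented cuboid to one object's points using principal component analysis (PCA) on the bird's-eye-view coordinates. `fit_cuboid` is a hypothetical helper, and PCA fitting assumes the object is fully visible. With the one-sided views typical of LiDAR, it can misjudge heading and size, which is why a human still reviews the pre-label.

```python
import math


def fit_cuboid(points):
    """Fit an oriented box to one object's (x, y, z) points as a pre-label."""
    n = len(points)
    mx = sum(p[0] for p in points) / n
    my = sum(p[1] for p in points) / n
    # Covariance of the bird's-eye-view (x, y) coordinates.
    cxx = sum((p[0] - mx) ** 2 for p in points) / n
    cyy = sum((p[1] - my) ** 2 for p in points) / n
    cxy = sum((p[0] - mx) * (p[1] - my) for p in points) / n
    # Direction of the dominant principal axis. It is only defined modulo pi,
    # so an annotator still has to confirm which end is the front.
    yaw = 0.5 * math.atan2(2 * cxy, cxx - cyy)
    c, s = math.cos(yaw), math.sin(yaw)
    # Project onto the principal axes to measure the extents.
    along = [(p[0] - mx) * c + (p[1] - my) * s for p in points]
    across = [-(p[0] - mx) * s + (p[1] - my) * c for p in points]
    zs = [p[2] for p in points]
    a_mid = (max(along) + min(along)) / 2
    b_mid = (max(across) + min(across)) / 2
    return {
        # Midpoint of the extents, rotated back into the world frame.
        "center": (mx + a_mid * c - b_mid * s,
                   my + a_mid * s + b_mid * c,
                   (max(zs) + min(zs)) / 2),
        "size": (max(along) - min(along),    # length
                 max(across) - min(across),  # width
                 max(zs) - min(zs)),         # height
        "yaw": yaw,
    }


# Synthetic cluster: a 4 m x 1.6 m x 1.5 m object at (5, 3) with a 30-degree heading.
heading = math.radians(30)
cluster = []
for u in (-2.0, -1.0, 0.0, 1.0, 2.0):
    for v in (-0.8, 0.8):
        for z in (0.0, 1.5):
            cluster.append((5 + u * math.cos(heading) - v * math.sin(heading),
                            3 + u * math.sin(heading) + v * math.cos(heading),
                            z))

box = fit_cuboid(cluster)
print([round(x, 3) for x in box["size"]], round(math.degrees(box["yaw"]), 1))
```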

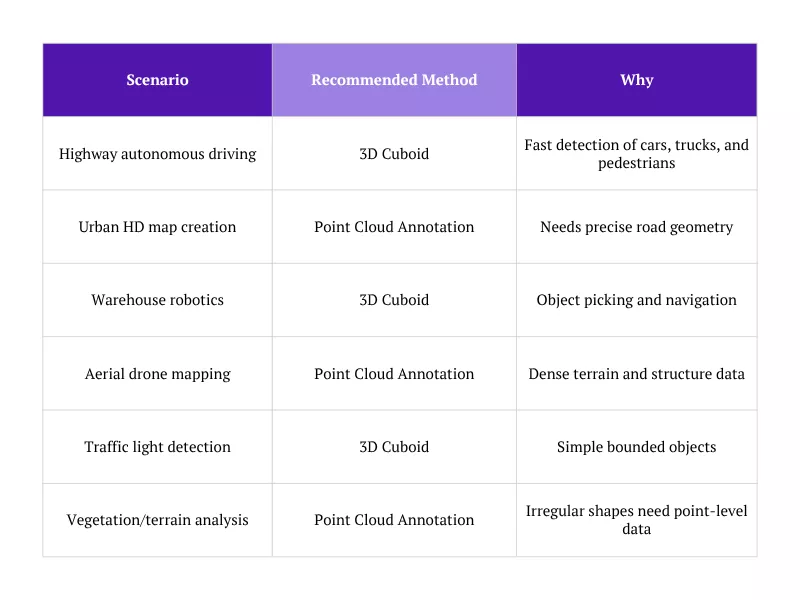

Choosing the Right Method: Scenario Guide

For fast-moving object detection tasks, cuboid annotation is generally better suited to production-scale workflows than point-level annotation.

Can You Use Both Together?

Yes, and in many advanced AI pipelines, teams use both annotation methods in combination. For example, a self-driving car system might use a 3D cuboid to detect and classify objects on the road, while also using point cloud annotation to generate precise HD maps of the surrounding environment.

This hybrid approach gives AI models the best of both worlds: fast, scalable object detection through 3D cuboid, and rich spatial context through point cloud annotation. Many data annotation services now offer both techniques as part of a full LiDAR annotation package.

How Do Teams Combine Both Methods?

- Use a 3D cuboid for dynamic objects like vehicles and pedestrians.

- Use point cloud annotation for static infrastructure like roads, signs, and buildings.

- Merge both annotation types into a unified dataset for model training.

- Apply quality checks at each stage to ensure consistent annotation accuracy.
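The merge step above can be sketched as follows. This assumes a hypothetical format where static scenery has per-point labels and dynamic objects have axis-aligned boxes (real cuboids also carry a heading). Points inside a cuboid take that cuboid's class and an instance ID, and boxes that contain no points are flagged as a simple quality check.

```python
from dataclasses import dataclass


@dataclass
class Box:
    label: str
    lo: tuple  # (x_min, y_min, z_min); axis-aligned to keep the sketch short
    hi: tuple  # (x_max, y_max, z_max)


def merge_annotations(points, static_labels, boxes):
    """Combine per-point labels for static scenery with cuboids for dynamic objects.

    Returns one (class, instance_id) pair per point, where instance_id is 0 for
    static points and 1..N for points inside the N cuboids, plus the IDs of any
    cuboids that contain no points (a cheap quality check).
    """
    merged = [(label, 0) for label in static_labels]
    empty_boxes = []
    for instance_id, box in enumerate(boxes, start=1):
        hits = 0
        for i, point in enumerate(points):
            if all(lo <= v <= hi for v, lo, hi in zip(point, box.lo, box.hi)):
                merged[i] = (box.label, instance_id)  # later boxes overwrite earlier ones
                hits += 1
        if hits == 0:
            empty_boxes.append(instance_id)
    return merged, empty_boxes


points = [(1.0, 1.0, 0.0), (5.0, 0.0, 0.8), (5.5, 0.2, 1.0), (20.0, 3.0, 0.0)]
static = ["road", "unlabeled", "unlabeled", "building"]
boxes = [Box("car", (4.0, -1.0, 0.1), (6.5, 1.0, 1.8)),
         Box("pedestrian", (30.0, 30.0, 0.0), (31.0, 31.0, 2.0))]

merged, empty = merge_annotations(points, static, boxes)
print(merged)  # the two middle points become instance 1 of class "car"
print(empty)   # [2]: the pedestrian box contains no points and needs review
```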

Best Practices for High-Quality LiDAR Annotation

Regardless of which method you choose, annotation quality directly affects model performance. Here are some practices that experienced annotation teams follow:

For Point Cloud Annotation

- Define clear labeling guidelines for each object category before the project starts.

- Use multi-pass review workflows where different annotators check each other’s work.

- Adjust point density settings based on object size to avoid under-annotation of small objects.

- Use 3D visualization tools to inspect annotated point clouds from multiple angles.
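A multi-pass review can be quantified by comparing two annotators' labels point by point. The sketch below is one simple approach, assuming both passes label the same points in the same order. The `agreement_report` function and the 0.9 IoU threshold are illustrative choices, not an industry standard.

```python
def agreement_report(labels_a, labels_b, min_iou=0.9):
    """Compare two annotators' per-point labels for the same scan.

    Returns per-class IoU, the indices of points the passes disagree on, and
    the classes whose IoU falls below min_iou (an assumed review threshold).
    """
    if len(labels_a) != len(labels_b):
        raise ValueError("both passes must label the same points")
    pairs = list(zip(labels_a, labels_b))
    per_class = {}
    for cls in sorted(set(labels_a) | set(labels_b)):
        inter = sum(a == cls and b == cls for a, b in pairs)
        union = sum(a == cls or b == cls for a, b in pairs)
        per_class[cls] = inter / union  # union > 0: cls occurs in at least one pass
    disputed = [i for i, (a, b) in enumerate(pairs) if a != b]
    needs_review = [cls for cls, iou in per_class.items() if iou < min_iou]
    return per_class, disputed, needs_review


first_pass = ["road", "road", "car", "car", "vegetation", "road"]
second_pass = ["road", "road", "car", "road", "vegetation", "road"]
per_class, disputed, needs_review = agreement_report(first_pass, second_pass)
print(per_class)     # {'car': 0.5, 'road': 0.75, 'vegetation': 1.0}
print(disputed)      # [3]
print(needs_review)  # ['car', 'road']
```

The disputed indices give reviewers a short list of points to inspect instead of re-checking the whole scan.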

For 3D Cuboid

- Train annotators to align cuboid orientation precisely with the object’s heading direction.

- Set minimum confidence thresholds to flag ambiguous annotations for review.

- Use consistent box dimensions for recurring object types like standard vehicles.

- Apply automated pre-labeling to speed up the process and reduce manual effort.
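Several of the cuboid practices above can be turned into automatic review rules. This sketch assumes cuboids stored as plain dictionaries and uses hypothetical thresholds and class templates. It flags low-confidence pre-labels, headings that disagree with a reference (for example, a second annotator's box), and dimensions far from the class template. Angle differences are wrapped so that headings of 179° and -179° count as 2° apart.

```python
import math

# Hypothetical guideline values; a real project would define its own.
TEMPLATES = {"car": (4.5, 1.8, 1.5)}  # typical (length, width, height), metres
MIN_CONFIDENCE = 0.6                  # pre-label confidence below this is flagged
MAX_HEADING_ERROR = math.radians(10)
MAX_SIZE_DEVIATION = 0.25             # 25% relative deviation per dimension


def heading_error(yaw_a, yaw_b):
    """Smallest absolute difference between two yaw angles, in radians."""
    # Wrap the difference into [-pi, pi) so 179 and -179 degrees count as 2 apart.
    return abs((yaw_a - yaw_b + math.pi) % (2 * math.pi) - math.pi)


def review_flags(box, reference_yaw=None):
    """Return the reasons a (pre-)labeled cuboid should go to human review."""
    flags = []
    if box.get("confidence", 1.0) < MIN_CONFIDENCE:
        flags.append("low confidence")
    # reference_yaw might come from a second annotator or the object's track.
    if reference_yaw is not None and heading_error(box["yaw"], reference_yaw) > MAX_HEADING_ERROR:
        flags.append("heading mismatch")
    template = TEMPLATES.get(box["label"])
    if template:
        for name, actual, expected in zip(("length", "width", "height"), box["size"], template):
            if abs(actual - expected) / expected > MAX_SIZE_DEVIATION:
                flags.append(f"unusual {name}")
    return flags


good = {"label": "car", "size": (4.4, 1.9, 1.5), "yaw": math.radians(179), "confidence": 0.95}
bad = {"label": "car", "size": (2.5, 1.8, 1.5), "yaw": 0.0, "confidence": 0.4}
print(review_flags(good, reference_yaw=math.radians(-179)))  # []
print(review_flags(bad, reference_yaw=math.radians(30)))
# ['low confidence', 'heading mismatch', 'unusual length']
```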

LiDAR Data Annotation Techniques: A Broader View

- Semantic Segmentation: Assigns a category label to every point in the cloud. It is among the most demanding forms of 3D point cloud annotation, but it delivers the richest scene understanding for AI models.

- Instance Segmentation: Labels individual objects within the same category separately, which is useful when multiple objects of the same type appear together.

- Panoptic Segmentation: Combines semantic and instance segmentation for a complete understanding of both background and foreground elements.

- Polygon Annotation: Outlines complex object boundaries in 2D slices of point cloud data, often used alongside 3D methods for a complete view.
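Panoptic labels are often stored by packing the semantic class and the instance ID into one integer per point. SemanticKITTI's label files, for example, use a 32-bit value with the semantic class in the lower 16 bits and the instance ID in the upper 16 bits; the sketch below follows that layout, with hypothetical class IDs. Unpacking the same array yields both the semantic segmentation view and the instance segmentation view.

```python
def pack_panoptic(semantic_id: int, instance_id: int) -> int:
    """Pack a semantic class ID and an instance ID into one 32-bit label."""
    if not (0 <= semantic_id < 2**16 and 0 <= instance_id < 2**16):
        raise ValueError("both IDs must fit in 16 bits")
    return (instance_id << 16) | semantic_id


def unpack_panoptic(label: int) -> tuple:
    """Split a packed label back into (semantic_id, instance_id)."""
    return label & 0xFFFF, label >> 16


# Hypothetical class IDs: 1 = road ("stuff", no instances), 10 = car ("things").
ROAD, CAR = 1, 10
labels = [pack_panoptic(ROAD, 0), pack_panoptic(CAR, 1),
          pack_panoptic(CAR, 1), pack_panoptic(CAR, 2)]

semantic = [unpack_panoptic(lbl)[0] for lbl in labels]   # semantic segmentation view
instances = [unpack_panoptic(lbl)[1] for lbl in labels]  # instance segmentation view
print(semantic)   # [1, 10, 10, 10]
print(instances)  # [0, 1, 1, 2]: two distinct cars
```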

Understanding these various annotation types helps teams build workflows that match their AI model requirements. The ability to annotate 3D environments accurately is what separates good LiDAR AI systems from great ones.

Advanced features such as 3D perception modeling, labeling within the full 3D scene context, and multi-class labeling are now standard in modern annotation platforms.

These features help produce better 3D models and more reliable outputs in downstream tasks.

Final Verdict

There is no single right answer to this question. The decision depends on what your AI model needs to do.

Choose point cloud annotation when your model needs to understand the precise shape and structure of a 3D environment. It is the better choice for HD mapping, geospatial analysis, terrain classification, and any task where annotation accuracy is the top priority.

Choose 3D cuboid annotation when your model needs to detect and track objects quickly and efficiently. It is the better choice for autonomous driving datasets, robotics applications, and any task where annotation speed and scalability matter most.

And if your project demands the best of both approaches, a hybrid annotation strategy that combines point cloud and 3D cuboid techniques can give your AI model a competitive edge.

As datasets grow larger and model requirements become more complex, annotation becomes less about choosing one method and more about knowing when to use each. Understanding the accuracy trade-offs between 3D cuboid and point-wise annotation is what helps experienced teams make that call confidently.

Working with an experienced data annotation services provider can help you choose the right method, maintain consistent annotation quality, and scale your data labeling workflow.

Frequently Asked Questions

What is the main difference between point cloud annotation and 3D cuboid?

Point cloud annotation labels every individual data point in a LiDAR scan, giving AI models a detailed, point-level understanding of the environment. 3D cuboid annotation places a three-dimensional bounding box around each object, capturing its position, size, and orientation without labeling each point. In short, point cloud annotation offers more granular data, while 3D cuboid annotation is faster and better suited for object detection tasks.

When should I use point cloud annotation over 3D cuboid?

Use point cloud annotation when your AI model needs to understand fine-grained spatial detail. It is the right choice for HD map creation, terrain analysis, geospatial annotation, and semantic segmentation tasks. If your project involves irregular shapes, dense environments, or requires precise boundary detection, point cloud annotation will deliver better results than 3D cuboid labeling.

Which is more accurate for autonomous vehicles: point cloud or 3D cuboid?

It depends on the task. For detecting and tracking dynamic objects like vehicles and pedestrians, a 3D cuboid is accurate enough and significantly faster to produce at scale. For building HD maps or understanding the precise geometry of the road environment, point cloud annotation is more accurate. Many production autonomous driving pipelines use both methods together to cover all perception needs.

Can point cloud annotation and 3D cuboid be used together?

Yes, and this is a common approach in advanced LiDAR-based AI systems. Teams often use a 3D cuboid for moving objects and point cloud annotation for static scene elements. Combining both gives AI models a complete picture of their environment, with object-level detection and scene-level spatial awareness working together in the same dataset.

Which technique is best for AI data annotation in robotics?

For most robotics applications, a 3D cuboid is the preferred starting point. Robots typically need to detect, locate, and interact with specific objects, such as boxes in a warehouse or components on an assembly line. 3D bounding boxes give robotic AI models the spatial data they need to navigate and manipulate objects effectively. However, for robots operating in complex or unstructured environments, point cloud annotation may be needed to handle irregular surfaces and fine spatial detail.