Summary - Content moderation tools are software that helps websites and businesses check and control what users post online. They use technologies such as artificial intelligence (AI) and machine learning (ML) to spot and remove unwanted or harmful content.

When you post, comment, or share something online, you’re adding to the huge amount of digital stuff out there.

But with this boon of online expression comes the challenge of filtering out inappropriate or harmful material.

Enter Automated Content Moderation tools. Let’s dive deep into their significance.

Why is Content Moderation Important?

Digital platforms today are teeming with user-generated content. The online space buzzes with conversations, from short tweets to long-form articles. However, not all of it is constructive. Some content can be inappropriate, misleading, or outright harmful. Here, content moderation comes into play.

Defining Sensitive Content

Sensitive content ranges from explicit material and hate speech to misinformation. Such content can upset people, especially children, and hurt a company’s good name. Keeping online spaces safe therefore protects both users and the companies that host them.

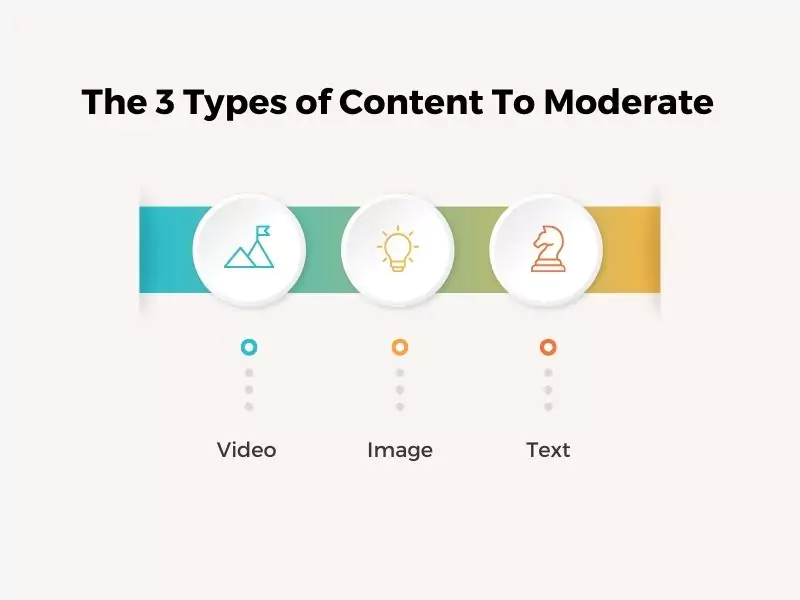

The 3 Types of Content To Moderate

Video

Video content has surged in popularity. However, issues like deepfakes (manipulated videos that seem real) and explicit material require robust video moderation software.

Image

A picture is worth a thousand words, but what if those words spread hate or misinformation? Detecting harmful imagery is a monumental task because the meaning of an image often depends on context and interpretation, which is where image moderation tools come in.

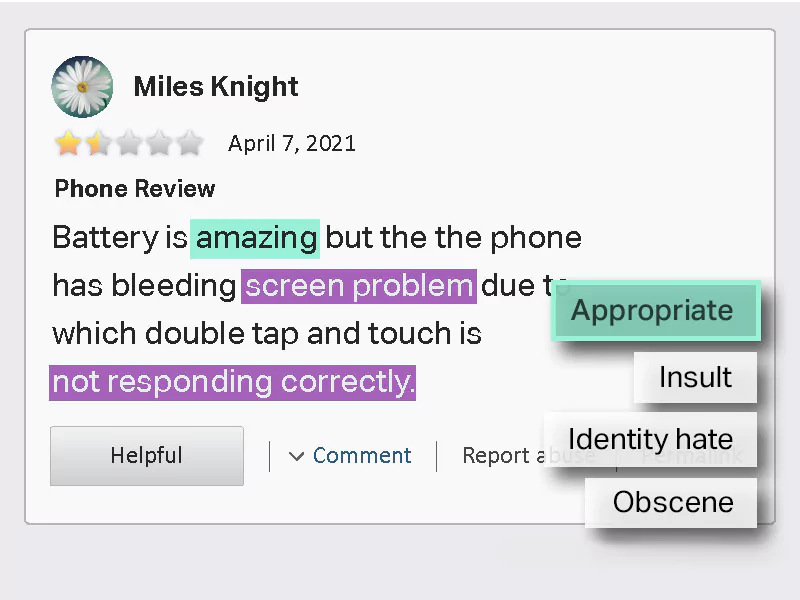

Text

Written content, spanning blogs, comments, reviews, etc., can contain hate speech, false information, and other potential triggers. Detecting and moderating such content via comment moderation tools is crucial.

Methods of Content Moderation

1. Pre-moderation

Pre-moderation works like a filter: all content is reviewed before it is published or shared online. By adopting this approach, companies can ensure that only content aligning with their guidelines and standards is released.

This approach is particularly effective for brands that want to maintain a squeaky-clean online image. The downside is that it can slow down publishing and may stifle real-time user engagement.

Because every item is checked before it goes live, pre-moderation also avoids some of the challenges that come with relying on automated moderation alone.
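The pre-moderation flow can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the class name `PreModerationQueue` and its methods are hypothetical, and the approve/reject decision is passed in by hand where a real platform would have a reviewer or a review tool.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class PreModerationQueue:
    """Hypothetical queue that holds submissions until they are reviewed."""
    pending: Dict[int, str] = field(default_factory=dict)
    published: List[str] = field(default_factory=list)
    _next_id: int = 1

    def submit(self, text: str) -> int:
        # Nothing is visible yet; the item only enters the pending queue.
        item_id = self._next_id
        self._next_id += 1
        self.pending[item_id] = text
        return item_id

    def review(self, item_id: int, approved: bool) -> None:
        # Only approved items are ever published; rejected ones are dropped.
        text = self.pending.pop(item_id)
        if approved:
            self.published.append(text)


queue = PreModerationQueue()
first = queue.submit("Great product, works as described.")
second = queue.submit("Buy cheap followers at spam.example")
queue.review(first, approved=True)
queue.review(second, approved=False)
print(queue.published)
```

The delay the article mentions is visible here: a post does not appear in `published` until someone calls `review`.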

2. Post-moderation

In the post-moderation approach, content gets uploaded first and reviewed afterwards. It ensures real-time interaction and immediate content sharing, enhancing user experience. However, there’s a small window where inappropriate content might be accessible to users before it gets flagged.

This method is suitable for platforms with high user engagement, where immediate content posting is integral to the platform’s vibrancy. Even so, the platform still keeps a close eye on community guidelines and on protecting its brand.
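As a rough sketch of post-moderation, the Python below publishes content immediately and checks it afterwards. The class `PostModerationFeed` and the `looks_like_spam` check are hypothetical stand-ins, not a real platform’s API.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List


@dataclass
class PostModerationFeed:
    """Hypothetical feed: publish first, review afterwards."""
    visible: Dict[int, str] = field(default_factory=dict)
    review_queue: List[int] = field(default_factory=list)
    _next_id: int = 1

    def publish(self, text: str) -> int:
        # Content goes live immediately and is queued for a later check.
        item_id = self._next_id
        self._next_id += 1
        self.visible[item_id] = text
        self.review_queue.append(item_id)
        return item_id

    def review_next(self, violates_guidelines: Callable[[str], bool]) -> None:
        # Check the oldest unreviewed item; take it down if it breaks the rules.
        item_id = self.review_queue.pop(0)
        if violates_guidelines(self.visible[item_id]):
            del self.visible[item_id]


def looks_like_spam(text: str) -> bool:
    # Placeholder check, assumed for illustration only.
    return "free prizes" in text.lower()


feed = PostModerationFeed()
feed.publish("Loved the new update!")
feed.publish("Click here for free prizes")
live_before_review = len(feed.visible)  # both posts are visible at this point
feed.review_next(looks_like_spam)
feed.review_next(looks_like_spam)
print(live_before_review, list(feed.visible.values()))
```

The gap between `publish` and `review_next` is the small window in which inappropriate content can be seen.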

3. Reactive Moderation

Reactive moderation places trust in the platform’s community. Here, rather than the platform actively moderating content, it relies on its users to report any content they find offensive or inappropriate. Once content is flagged, the platform’s moderators review it and take appropriate action.

It’s a democratic approach that leverages the collective vigilance of the user base. Platforms with strong, engaged communities often find this method effective, as users are invested in keeping the platform’s content clean and relevant.
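One way to picture reactive moderation is a report counter that escalates an item once enough distinct users have flagged it. The sketch below is an assumption for illustration: the article does not specify a threshold, and `ReportTracker` and `REPORT_THRESHOLD` are hypothetical names.

```python
from collections import defaultdict
from typing import Dict, Set

REPORT_THRESHOLD = 3  # assumed value; a real platform would tune this


class ReportTracker:
    """Hypothetical tracker that escalates content after enough user reports."""

    def __init__(self, threshold: int = REPORT_THRESHOLD) -> None:
        self.threshold = threshold
        self._reporters: Dict[str, Set[str]] = defaultdict(set)

    def report(self, content_id: str, user_id: str) -> bool:
        """Record a report; return True when moderators should review the item."""
        # A set means repeat reports from the same user count only once.
        self._reporters[content_id].add(user_id)
        return len(self._reporters[content_id]) >= self.threshold


tracker = ReportTracker()
results = [tracker.report("post-42", user) for user in ["ana", "ana", "ben", "cy"]]
print(results)  # [False, False, False, True]
```

Counting distinct reporters rather than raw reports is a design choice that makes it harder for a single user to force a review.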

4. Automated Moderation

Automated moderation harnesses artificial intelligence to rapidly scan, recognize, and act upon vast amounts of content in real time. These systems detect and handle inappropriate content efficiently using algorithms and predefined criteria.

This method is especially valuable for platforms with massive daily content uploads, where manual moderation would be impractical. However, keeping these systems updated and calibrated is essential to avoid false positives and ensure they evolve with changing content trends.
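A minimal sketch of the "algorithms and predefined criteria" idea follows. The patterns, weights, and thresholds are all hypothetical, and the regular expressions stand in for the trained models a production system would typically use. Borderline scores go to a human reviewer rather than being removed outright, which is one common way to limit false positives.

```python
import re
from typing import Dict

# Hypothetical predefined criteria: pattern -> weight (illustrative only).
RULES: Dict[str, float] = {
    r"\bfree money\b": 0.6,
    r"\bclick here\b": 0.3,
    r"https?://": 0.2,
}
REMOVE_AT = 0.8  # assumed threshold for automatic removal
REVIEW_AT = 0.3  # assumed threshold for sending to a human reviewer


def score(text: str) -> float:
    """Sum the weights of every rule that matches, capped at 1.0."""
    lowered = text.lower()
    total = sum(weight for pattern, weight in RULES.items() if re.search(pattern, lowered))
    return min(total, 1.0)


def decide(text: str) -> str:
    """Map a score to one of three actions."""
    value = score(text)
    if value >= REMOVE_AT:
        return "remove"
    if value >= REVIEW_AT:
        return "human_review"
    return "approve"


print(decide("Free money! Click here: http://spam.example"))  # remove
print(decide("Click here to read my review"))                 # human_review
print(decide("Thanks for the helpful answer"))                # approve
```

Keeping the rules and thresholds in plain data structures makes them easy to update as content trends change.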

Each moderation method comes with its own strengths and potential pitfalls. The right choice often depends on the platform’s nature, its user base, and the specific content management challenges it faces.

Top 5 Content Moderation Tools

1. Hive Moderation

Hive Moderation is more than just a standard moderation tool. Designed with user experience in mind, Hive offers several unique features focused on meeting the needs of various industries, from social media platforms to e-commerce websites.

Its robust algorithms, including child-safe content filters, can detect and manage inappropriate content, ensuring platforms remain safe and user-friendly.

Hive’s easy-to-use dashboard allows businesses to customize their moderation criteria, ensuring their brand’s image remains untarnished.

2. Amazon Rekognition

A product of Amazon’s vast technological ecosystem, Amazon Rekognition is a force to be reckoned with among content flagging systems.

It offers strong content scanning capabilities by harnessing the power of Amazon’s advanced AI.

It’s exceptionally proficient in image and video analysis, identifying objects, people, text, and scenes, and detecting inappropriate content. Given its robust feature set and scalability, it’s a favorite among large enterprises aiming to maintain content integrity across their platforms.
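For readers who want to see what a call looks like, here is a hedged sketch using the boto3 SDK’s `detect_moderation_labels` operation for Rekognition. The wrapper name `find_moderation_labels`, the default `min_confidence` of 60, and the injectable `client` parameter are illustrative choices rather than anything the service prescribes. Running it against the real service requires the boto3 package and configured AWS credentials.

```python
from typing import Any, Dict, List


def find_moderation_labels(
    image_bytes: bytes,
    client: Any = None,
    min_confidence: float = 60.0,
) -> List[Dict[str, Any]]:
    """Return the moderation labels Rekognition reports for one image."""
    if client is None:
        # Imported lazily so the function can be tested with a stand-in client.
        import boto3
        client = boto3.client("rekognition")
    response = client.detect_moderation_labels(
        Image={"Bytes": image_bytes},
        MinConfidence=min_confidence,
    )
    # Keep only the label name and confidence score from each entry.
    return [
        {"name": label["Name"], "confidence": label["Confidence"]}
        for label in response["ModerationLabels"]
    ]
```

A typical use would read an uploaded file’s bytes, call `find_moderation_labels(data)`, and hold the upload for review if the returned list is not empty.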

3. WebPurify

WebPurify is a versatile, integration-friendly moderation tool that operates seamlessly across various platforms. Whether you’re moderating text, images, or videos, WebPurify offers specialized solutions to keep your platform’s content in check.

Their extensive API documentation and support mean businesses can integrate WebPurify’s services effortlessly, irrespective of the platform they are on.

Moreover, with its human moderation services, WebPurify ensures a balanced approach to content scanning, mixing AI efficiency with human judgment.

4. Sightengine

In the world of real-time content analysis software, Sightengine stands out. It’s renowned for its lightning-fast content checks, ensuring inappropriate content gets flagged and handled instantly.

This efficiency is particularly vital for platforms with massive influxes of user-generated content, where timely moderation makes the difference between a thriving community and a toxic environment. This applies to dating sites as well as social media platforms.

Sightengine’s sophisticated AI can handle various content forms, from videos and images to live streaming, making it a versatile choice for businesses of all types.

5. Alibaba Cloud

Though newer to the scene than some competitors, Alibaba Cloud’s content moderation tools are quickly setting new benchmarks in the industry. A product of Alibaba Group, it comes packed with cutting-edge features and capabilities.

Given its cloud-based architecture, it offers scalability, making it a suitable choice for both budding startups and established giants.

With its focus on using AI to detect harmful content, from spam to explicit material, Alibaba Cloud is committed to creating a safer online environment for all.

While choosing content policy enforcement tools will depend on specific business needs and objectives, these five tools set the gold standard in today’s digital landscape.

Their advanced capabilities, adherence to content moderation best practices, and commitment to online safety make them essential assets for any brand aiming to foster a healthy online community.

Challenges in Content Moderation

Striking a balance between content control and freedom of expression is an ongoing debate. As technology advances, staying updated becomes a challenge.

With automated systems, there’s also the ethical question of potential biases and the need for transparency.

Conclusion

The online world is vast and filled with a mixture of enlightening and potentially harmful content. Ensuring a safe space becomes paramount as we increasingly rely on digital platforms for communication, learning, and entertainment.

Whether you’re a brand, content creator, or user, understanding and valuing content moderation, including services designed for children’s websites, is the way forward.

Frequently Asked Questions

1. What are content moderation tools?

Content moderation tools are software or services designed to monitor, analyze, and manage user-generated content on online platforms, ensuring that the content aligns with community guidelines and standards.

2. Why are content moderation tools important for online platforms?

Content moderation tools help maintain a safe and trustworthy online environment by filtering out inappropriate, harmful, or misleading content, thus enhancing user experience and protecting a platform’s reputation.

3. How does automated content moderation work?

Automated content moderation utilizes artificial intelligence (AI) and machine learning algorithms to rapidly scan, recognize, and moderate vast amounts of content in real time based on predefined criteria.

4. Are content moderation tools foolproof?

While they are highly effective, no tool is 100% foolproof. There might be occasional false positives or overlooked content. Hence, a combination of automated tools and human oversight is often recommended.

5. How does Hive Moderation stand out among other tools?

Hive Moderation offers unique features catering to various industries, making it versatile. Its advanced algorithms and customization options ensure precise content filtering based on specific needs.

6. Can content moderation tools detect both text and visual content?

Many advanced content moderation tools can detect inappropriate content in text, images, and videos, ensuring comprehensive moderation across content types. Amazon Rekognition, for example, analyzes images and videos, including text that appears within them.

7. Are there any challenges in using automated moderation tools?

While they offer speed and scalability, automated tools might sometimes misinterpret context or cultural nuances. Regular updates, calibrations, and human review can help address these challenges.
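Calibration usually starts with measuring how often the tool gets it wrong. The sketch below assumes a sample of items that a human has already reviewed; the function name `flag_metrics` and the sample data are hypothetical.

```python
from typing import List, Tuple


def flag_metrics(sample: List[Tuple[bool, bool]]) -> Tuple[float, float]:
    """Compute (precision, false_positive_rate) for an automated tool.

    Each pair in `sample` is (tool_flagged, human_says_violation).
    """
    true_pos = sum(1 for flagged, bad in sample if flagged and bad)
    false_pos = sum(1 for flagged, bad in sample if flagged and not bad)
    true_neg = sum(1 for flagged, bad in sample if not flagged and not bad)
    flagged_total = true_pos + false_pos
    clean_total = false_pos + true_neg
    precision = true_pos / flagged_total if flagged_total else 0.0
    false_positive_rate = false_pos / clean_total if clean_total else 0.0
    return precision, false_positive_rate


# Made-up review sample: 4 items flagged by the tool, 4 not flagged.
reviewed = [
    (True, True), (True, True), (True, True), (True, False),
    (False, False), (False, False), (False, False), (False, True),
]
precision, false_positive_rate = flag_metrics(reviewed)
print(precision, false_positive_rate)  # 0.75 0.25
```

Tracking these two numbers over time gives a simple signal for when the tool’s rules or thresholds need to be revisited.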
