As artificial intelligence (AI) continues to transform various industries, the importance of data in driving AI success cannot be overstated. However, for AI models to achieve high accuracy and reliability, they must be able to handle data edge cases effectively.

This article will explain the concept of data edge cases and explore their impact on AI performance. We will also provide strategies for solving data edge cases and highlight their significance in driving AI success.

The Importance Of Data In AI

Data is the foundation of AI. AI models are only as good as the data they are trained on. Machine learning algorithms learn from data, and that data’s quality and quantity directly impact the resulting model’s performance.

Inaccurate or incomplete data can lead to biased or unreliable models, while a lack of data can prevent models from learning effectively. As such, high-quality and diverse data is critical for achieving AI success.

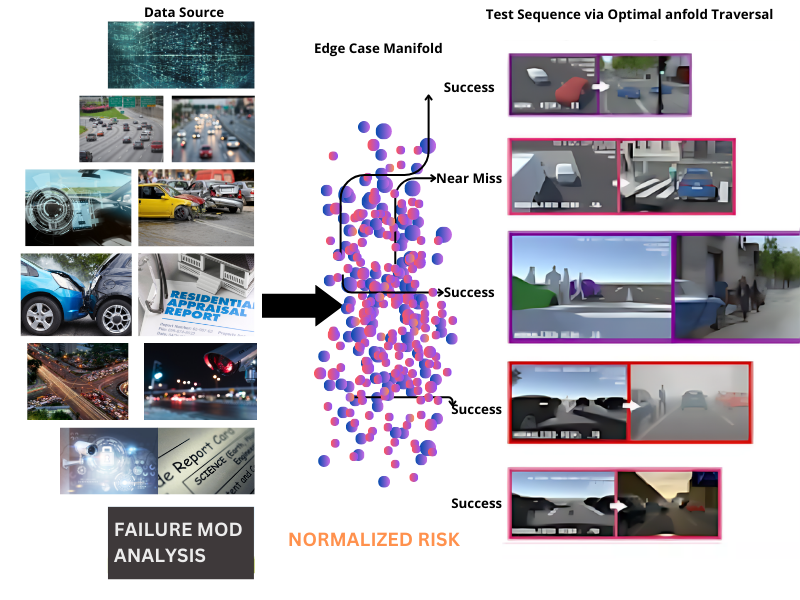

However, real-world data is not always straightforward, and ensuring that AI models can handle everything they encounter after deployment can be challenging. The unusual inputs that models struggle with are known as edge cases, and they must be addressed to ensure the accuracy and robustness of machine learning models. Failure to handle edge cases can lead to poor generalization to new and unexpected data, severely impacting real-world performance. To tackle these hurdles, developers are increasingly turning to innovative data generation techniques. One of the most powerful solutions in this space is the use of synthetic data in computer vision annotation.

In high-stakes applications like healthcare, finance, and autonomous vehicles, properly identifying and handling edge cases is critical for reducing the risk of errors and improving model performance.

Understanding Data Edge Cases

In AI data, edge cases are data points that lie at the outer limits or extreme ends of the data distribution. The term also refers to scenarios where data falls at the boundaries of what an AI model has been trained to handle.

These data points are often rare and may represent unusual or unexpected situations not well-represented in the training data. Edge cases are typically harder to classify accurately and may be more prone to errors than other data points, especially if they are not adequately addressed during model training.

Edge case examples in AI data include outliers, rare events, anomalies, and extreme values. For instance, in image annotation, edge cases may include images with poor lighting, unusual angles, or obscured objects.

In natural language processing, edge cases may include unusual sentence structures, dialects, or rare words. Data not considered during training can be an edge case for any AI model.
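
For numeric features, one simple way to surface the outliers and extreme values mentioned above is Tukey's fences, which flag points that fall far outside the interquartile range. The sketch below assumes the data is a plain list of numbers and uses the conventional multiplier k = 1.5; `flag_outliers` and the readings are illustrative, not part of any particular tool. Flagged points are only candidates: some will be recording errors to discard, and many real edge cases (an unusual camera angle, a rare dialect) will never show up as an outlier in a single number.

```python
import statistics


def flag_outliers(values, k=1.5):
    """Return indices of values outside Tukey's fences [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # quartile cut points
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [i for i, v in enumerate(values) if v < low or v > high]


# Hypothetical sensor readings; the last one is an extreme value
readings = [10, 12, 11, 13, 12, 11, 10, 12, 95]
print(flag_outliers(readings))  # [8]
```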

The Impact Of Edge Cases On AI Performance

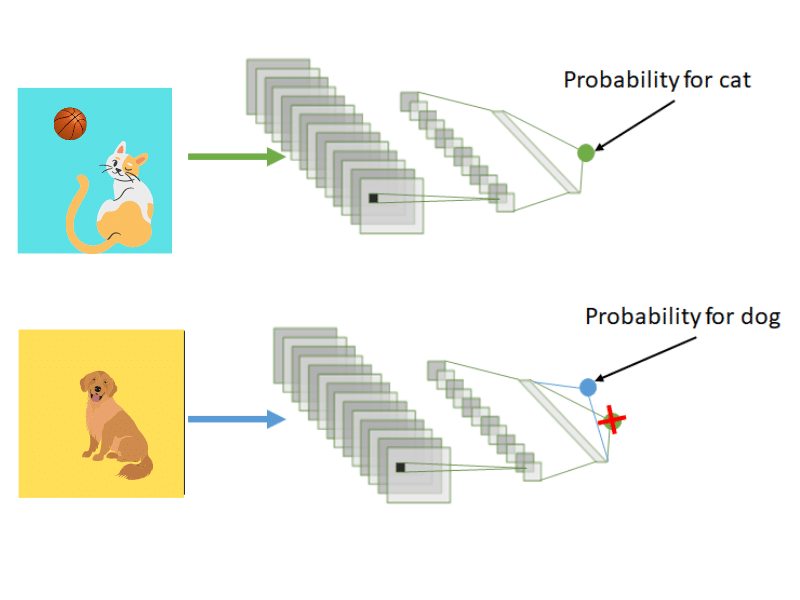

Ignoring edge cases in AI data can reduce a model's accuracy and robustness and raise ethical concerns. If edge cases are not considered during model training, the resulting model may be biased towards the more common cases in the data, leading to poor performance on new and unexpected data points.

Edge cases may also represent situations not well-represented in the training data. If these cases are not addressed, the resulting model may be more prone to errors and less effective at handling real-world scenarios. Moreover, failure to account for edge cases may result in biased models that unfairly discriminate against certain groups of people or fail to provide accurate predictions for certain types of data.
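
One practical consequence is that a single aggregate metric can hide poor performance on edge cases. A common remedy is to report accuracy separately for each slice of the evaluation data. The minimal sketch below assumes every evaluation record has been tagged with a hypothetical slice name; the records are made-up numbers chosen only to show the effect, and the key "overall" is reserved for the aggregate.

```python
from collections import defaultdict


def accuracy_by_slice(records):
    """records: iterable of (slice_name, y_true, y_pred) tuples.

    Returns a dict mapping each slice name, plus the key "overall", to its accuracy.
    """
    correct = defaultdict(int)
    total = defaultdict(int)
    for slice_name, y_true, y_pred in records:
        for key in (slice_name, "overall"):
            total[key] += 1
            correct[key] += int(y_true == y_pred)
    return {key: correct[key] / total[key] for key in total}


# Hypothetical evaluation records: 95 common cases (all correct) and
# 5 edge cases, such as images taken in poor lighting (only 1 correct)
records = [("common", "car", "car")] * 95
records += [("edge_case", "car", "car")]
records += [("edge_case", "car", "background")] * 4

print(accuracy_by_slice(records))
# {'common': 1.0, 'overall': 0.96, 'edge_case': 0.2}
```

Here 96% overall accuracy conceals the fact that only 1 of the 5 edge cases was classified correctly.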

Addressing edge cases, on the other hand, has several positive effects on machine learning models, which is why it is important to have a reputable data annotation service help with annotating the training data.

When edge cases are taken into account during model training, the resulting model is likely to be less biased towards the more common cases in the data. This can improve accuracy and robustness, making the model better equipped to handle new and unexpected data points.

Properly accounting for edge cases also has ethical benefits, leading to more equitable and less biased models. By addressing edge cases, machine learning models can provide more reliable predictions and be more effective in real-world scenarios.

Strategies For Solving Data Edge Cases

Solving data edge cases is crucial for creating effective and reliable AI models. Here are some strategies that can help:

Data Augmentation Techniques

Data augmentation techniques create new training data by applying various transformations to existing data. This can improve an AI model's ability to handle edge cases.

Because the model sees many variations of the same object or situation, it learns to recognize them, which makes it more robust and adaptable when edge cases appear.

For example, in image classification tasks, data augmentation techniques can include randomly cropping, rotating, or flipping the images. In object detection tasks, any bounding box annotations must be transformed along with the image so that they still match the objects.

Additionally, data augmentation techniques can help increase the diversity of the training data, reducing the risk of overfitting and improving the model’s generalizability.
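
The transformations described above can be sketched with nothing but the Python standard library. This is a minimal illustration that assumes an image is a list of rows of pixel values; real pipelines would normally rely on an image library instead. The helper names (`hflip`, `rotate90`, `random_crop`, `hflip_box`, `augment`) are hypothetical, and `hflip_box` shows why, for detection tasks, box annotations have to move with the pixels.

```python
import random


def hflip(img):
    # Mirror each row left-to-right (img is a list of rows of pixel values)
    return [row[::-1] for row in img]


def rotate90(img):
    # Rotate 90 degrees clockwise: reverse the row order, then transpose
    return [list(col) for col in zip(*img[::-1])]


def random_crop(img, height, width, rng):
    # Pick a random top-left corner so the crop fits inside the image
    top = rng.randint(0, len(img) - height)
    left = rng.randint(0, len(img[0]) - width)
    return [row[left:left + width] for row in img[top:top + height]]


def hflip_box(box, img_width):
    # box = (x_min, y_min, x_max, y_max) with x measured from 0 to img_width;
    # a horizontal flip maps x to img_width - x, so min and max swap roles
    x_min, y_min, x_max, y_max = box
    return (img_width - x_max, y_min, img_width - x_min, y_max)


def augment(img, rng, crop_size=(2, 2)):
    """Return the original image plus three transformed variants."""
    return [img, hflip(img), rotate90(img), random_crop(img, *crop_size, rng)]


image = [[1, 2, 3],
         [4, 5, 6]]  # hypothetical 2x3 grayscale image
rng = random.Random(42)
for variant in augment(image, rng):
    print(variant)
print(hflip_box((0, 0, 1, 2), img_width=3))  # (2, 0, 3, 2)
```

Each variant keeps the same label as the original image, so one labeled example yields several training examples at no extra annotation cost.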

Transfer Learning

Transfer learning involves using a pre-trained model as a starting point for a new task. This can be particularly useful when the new task has limited data or when it is similar to the task the pre-trained model was originally trained on.

By leveraging the knowledge already embedded in the pre-trained model, the model can learn more quickly and accurately, even when faced with edge cases that may not have been encountered during the pre-training stage.

This is because the pre-trained model has already learned to recognize common patterns and features in the data, which can be beneficial in identifying relevant patterns in new data.

Transfer learning can also reduce the amount of data needed for training, as the model can often achieve good results with fewer examples than a model trained from scratch.
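
In practice, transfer learning usually means reusing the layers of a large pre-trained neural network. The sketch below shrinks the idea to a toy logistic-regression model so it runs with only the standard library: weights learned on a large "source" task are used to initialize training on a small, related "target" task. The tasks, thresholds, learning rates, and helper names are all hypothetical, and a from-scratch baseline is included so the two can be compared on held-out data.

```python
import math
import random


def sigmoid(z):
    # Numerically stable logistic function
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict_proba(weights, x):
    # weights[0] is the bias; weights[1:] pair with the features in x
    return sigmoid(weights[0] + sum(w * xi for w, xi in zip(weights[1:], x)))


def train(data, init_weights, epochs, lr):
    """Stochastic gradient descent on the logistic loss.

    Returns a new weight list and leaves init_weights unchanged.
    """
    w = list(init_weights)
    for _ in range(epochs):
        for x, y in data:
            err = predict_proba(w, x) - y  # d(log-loss)/dz for one example
            w[0] -= lr * err
            for i, xi in enumerate(x):
                w[i + 1] -= lr * err * xi
    return w


def make_task(n, threshold, rng):
    # Toy binary task: the label is 1 when x0 + x1 exceeds `threshold`
    data = []
    for _ in range(n):
        x = (rng.uniform(-3, 3), rng.uniform(-3, 3))
        data.append((x, 1 if x[0] + x[1] > threshold else 0))
    return data


def accuracy(weights, data):
    hits = sum((predict_proba(weights, x) > 0.5) == bool(y) for x, y in data)
    return hits / len(data)


rng = random.Random(0)
source = make_task(2000, threshold=0.0, rng=rng)  # large "pre-training" dataset
target = make_task(20, threshold=0.5, rng=rng)    # small dataset for a related task
held_out = make_task(500, threshold=0.5, rng=rng)

pretrained = train(source, [0.0, 0.0, 0.0], epochs=5, lr=0.1)
# Transfer learning: fine-tune starting from the pre-trained weights
fine_tuned = train(target, pretrained, epochs=20, lr=0.05)
# Baseline: same small dataset and budget, but starting from zeros
from_scratch = train(target, [0.0, 0.0, 0.0], epochs=20, lr=0.05)

print("fine-tuned:", accuracy(fine_tuned, held_out))
print("from scratch:", accuracy(from_scratch, held_out))
```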

Active Learning

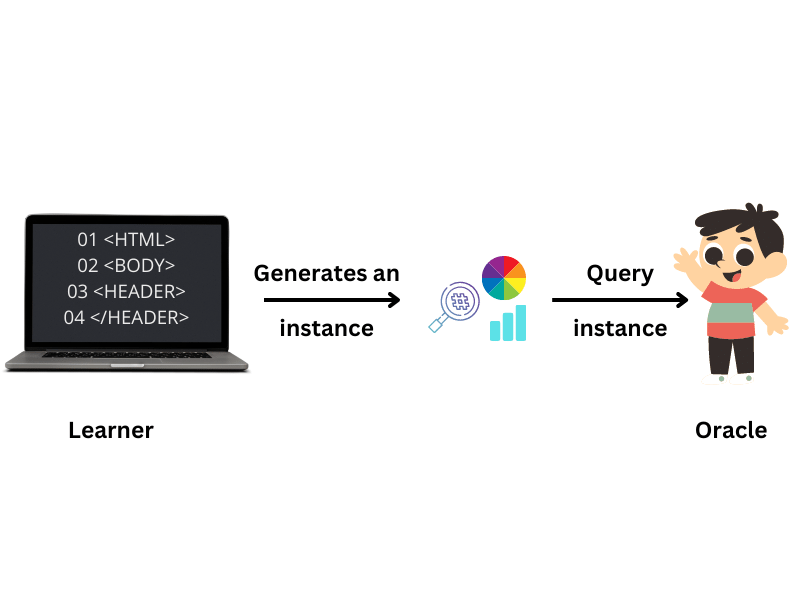

Active learning is a machine learning technique that involves selecting the most informative data points for labeling by a human expert and using those labeled examples to train the model.

This can be particularly useful when little labeled data is available or when the edge cases are especially challenging for the model to classify.

By selectively labeling the most informative data points, active learning can reduce the amount of manual labeling required, leading to faster and more efficient model training. This is valuable in real-world applications where manual labeling is time-consuming and expensive.
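
The selection step at the heart of active learning can be sketched as uncertainty sampling, one common strategy among several (others include margin sampling and query-by-committee). The sketch assumes a model that returns class probabilities for each unlabeled item; here that model is stood in for by a hypothetical dictionary of outputs, and the items it selects would then be sent to a human annotator.

```python
import math


def entropy(probs):
    # Shannon entropy in nats; higher values mean the model is less certain
    return -sum(p * math.log(p) for p in probs if p > 0)


def select_for_labeling(pool, predict_probs, k):
    """Uncertainty sampling: return indices of the k pool items with the highest entropy."""
    scores = [(entropy(predict_probs(item)), idx) for idx, item in enumerate(pool)]
    scores.sort(reverse=True)
    return [idx for _, idx in scores[:k]]


# Hypothetical class probabilities a current model might output for four unlabeled images
model_outputs = {
    "img_a": [0.98, 0.01, 0.01],  # confident
    "img_b": [0.40, 0.35, 0.25],  # uncertain
    "img_c": [0.70, 0.20, 0.10],
    "img_d": [0.34, 0.33, 0.33],  # nearly uniform: most uncertain
}
pool = list(model_outputs)
chosen = select_for_labeling(pool, model_outputs.get, k=2)
print([pool[i] for i in chosen])  # ['img_d', 'img_b']
```

After the annotator labels the chosen items, they are added to the training set, the model is retrained, and the loop repeats on the remaining pool.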

Human-In-The-Loop Approaches

Human-in-the-loop approaches involve the active participation of humans in the AI model training process to help identify and label edge cases.

This can include annotating video data, verifying model outputs, or providing feedback to the model. This approach has several benefits, such as better handling of edge cases and the creation of more reliable and trustworthy AI systems.

Human-in-the-loop approaches are particularly useful when dealing with complex, subjective, or rapidly changing data domains where machine learning models may struggle to generalize accurately.

Moreover, incorporating human feedback can help reduce bias and ensure that AI systems align with ethical and social considerations.
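
A common human-in-the-loop pattern is confidence-based routing: predictions the model is sure about are accepted automatically, uncertain ones are queued for a person to verify, and the verified labels become new training examples. The sketch below is a minimal illustration; `ReviewRouter`, the 0.8 threshold, and the frame identifiers are all hypothetical. In practice the threshold would be tuned on validation data, and model confidence scores may need calibration before they are trusted this way.

```python
from dataclasses import dataclass, field


@dataclass
class ReviewRouter:
    """Hypothetical helper: route low-confidence predictions to human reviewers."""

    threshold: float = 0.8                            # assumed confidence cut-off
    accepted: dict = field(default_factory=dict)      # item_id -> final label
    review_queue: dict = field(default_factory=dict)  # item_id -> model label awaiting review

    def route(self, item_id, label, confidence):
        # Confident predictions are accepted; uncertain ones go to a human
        if confidence >= self.threshold:
            self.accepted[item_id] = label
            return "accepted"
        self.review_queue[item_id] = label
        return "needs_review"

    def apply_reviews(self, human_labels):
        """Record human verdicts and return them as new (item_id, label) training examples."""
        new_examples = []
        for item_id, human_label in human_labels.items():
            if item_id in self.review_queue:  # ignore ids that were never queued
                del self.review_queue[item_id]
                self.accepted[item_id] = human_label
                new_examples.append((item_id, human_label))
        return new_examples


router = ReviewRouter(threshold=0.8)
router.route("frame_001", "pedestrian", 0.97)
router.route("frame_002", "pedestrian", 0.41)  # e.g., partially occluded, low light
corrections = router.apply_reviews({"frame_002": "cyclist"})
print(corrections)  # [('frame_002', 'cyclist')]
```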

Conclusion

As discussed in this article, solving data edge cases is crucial for the success of AI. Edge cases can significantly affect AI performance, and ignoring them can lead to lower accuracy, reduced reliability, and potential safety issues.

By incorporating edge cases in the training data and using strategies such as data augmentation, transfer learning, active learning, and human-in-the-loop approaches, AI models can become more robust and perform better in real-world scenarios. Further research is needed to develop more effective strategies for solving data edge cases and improving the performance and safety of AI.

Improvements in annotation, such as careful visual text labeling, matter in this context as well. Better annotation techniques can improve the quality of AI training datasets, leading to more reliable and robust machine learning models that handle edge cases better and contribute to improved performance and safety in AI applications.
